Open Interpreter

What is Open Interpreter?

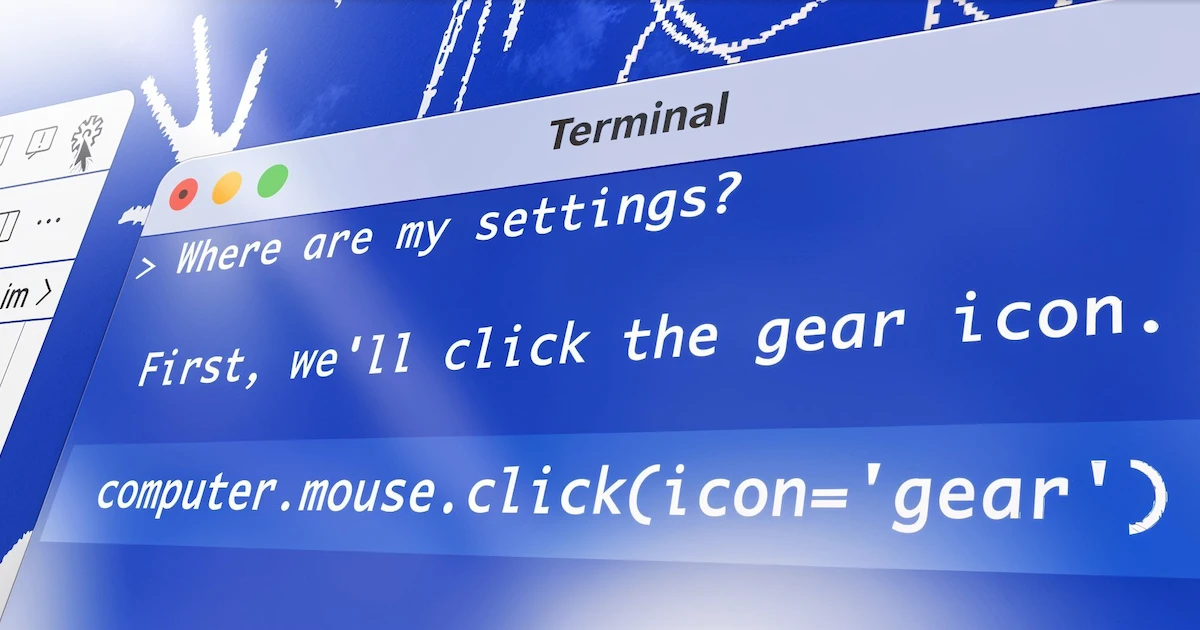

Open Interpreter lets LLMs run code (Python, JavaScript, Shell, and more) locally. After installing, you can chat with Open Interpreter through a ChatGPT-like interface in your terminal by running $ interpreter.

This provides a natural-language interface to your computer’s general-purpose capabilities:

- Create and edit photos, videos, PDFs, etc.
- Control a Chrome browser to perform research
- Plot, clean, and analyze large datasets
- …etc.

⚠️ Note: You’ll be asked to approve code before it’s run.

The New Computer Update introduces `--os` and a new Computer API.

Demo

Install Open Interpreter

pip install open-interpreter

Terminal

After installation, simply run interpreter:

interpreter

Python

from interpreter import interpreter

interpreter.chat("Plot AAPL and META's normalized stock prices") # Executes a single command

interpreter.chat() # Starts an interactive chat

Comparison to ChatGPT’s Code Interpreter

OpenAI’s release of Code Interpreter with GPT-4 presents a fantastic opportunity to accomplish real-world tasks with ChatGPT.

However, OpenAI’s service is hosted, closed-source, and heavily restricted:

- No internet access.
- 100 MB maximum upload, 120-second runtime limit.
- State is cleared (along with any generated files or links) when the environment dies.

Open Interpreter overcomes these limitations by running in your local environment. It has full access to the internet, isn’t restricted by time or file size, and can utilize any package or library.

This combines the power of GPT-4’s Code Interpreter with the flexibility of your local development environment.

Commands

Update: The Generator Update (0.1.5) introduced streaming:

message = "What operating system are we on?"

for chunk in interpreter.chat(message, display=False, stream=True):
    print(chunk)

Interactive Chat

To start an interactive chat in your terminal, either run interpreter from the command line:

interpreter

Or interpreter.chat() from a .py file:

interpreter.chat()

You can also stream each chunk:

message = "What operating system are we on?"

for chunk in interpreter.chat(message, display=False, stream=True):
    print(chunk)

Programmatic Chat

For more precise control, you can pass messages directly to .chat(message):

interpreter.chat("Add subtitles to all videos in /videos.")

# ... Streams output to your terminal, completes task ...

interpreter.chat("These look great but can you make the subtitles bigger?")

# ...

Start a New Chat

In Python, Open Interpreter remembers conversation history. If you want to start fresh, you can reset it:

interpreter.reset()

Save and Restore Chats

interpreter.chat() returns a List of messages, which can be used to resume a conversation with interpreter.messages = messages:

messages = interpreter.chat("My name is Killian.") # Save messages to 'messages'
interpreter.reset() # Reset interpreter ("Killian" will be forgotten)
interpreter.messages = messages # Resume chat from 'messages' ("Killian" will be remembered)

Customize System Message

You can inspect and configure Open Interpreter’s system message to extend its functionality, modify permissions, or give it more context.

interpreter.system_message += """
Run shell commands with -y so the user doesn't have to confirm them.
"""

print(interpreter.system_message)

Change your Language Model

Open Interpreter uses LiteLLM to connect to hosted language models.

You can change the model by setting the model parameter:

interpreter --model gpt-3.5-turbo
interpreter --model claude-2
interpreter --model command-nightly

In Python, set the model on the object:

interpreter.llm.model = "gpt-3.5-turbo"

Find the appropriate “model” string for your language model here.

Running Open Interpreter locally

Terminal

Open Interpreter uses LM Studio to connect to local language models (experimental).

Simply run interpreter in local mode from the command line:

interpreter --local

You will need to run LM Studio in the background.

- Download https://lmstudio.ai/ then start it.
- Select a model then click ↓ Download.
- Click the ↔️ button on the left (below 💬).
- Select your model at the top, then click Start Server.

Once the server is running, you can begin your conversation with Open Interpreter.

(When you run the command interpreter --local, the steps above will be displayed.)
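Before starting a chat, it can help to confirm that the local server is actually reachable. The sketch below is not part of Open Interpreter. It assumes the LM Studio server listens at http://localhost:1234/v1 and, like other OpenAI-compatible servers, answers GET requests on a /models route; the helper names models_url and server_is_up are hypothetical.

```python
import http.client
import urllib.error
import urllib.request


def models_url(api_base: str) -> str:
    """Build the model-listing URL for an OpenAI-compatible server."""
    return api_base.rstrip("/") + "/models"


def server_is_up(api_base: str, timeout: float = 2.0) -> bool:
    """Return True if the server answers the model-listing request with HTTP 200."""
    try:
        with urllib.request.urlopen(models_url(api_base), timeout=timeout) as response:
            return response.status == 200
    except (urllib.error.URLError, http.client.HTTPException, OSError, ValueError):
        # Connection refused, timeout, HTTP error status, or a malformed URL.
        return False
```

For example, server_is_up("http://localhost:1234/v1") should return False until Start Server has been clicked, assuming nothing else is listening on that port.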

Note: Local mode sets your context_window to 3000, and your max_tokens to 1000. If your model has different requirements, set these parameters manually (see below).
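When choosing these values by hand, a quick sanity check can catch a reply budget that cannot fit inside the window. The helper below is a hypothetical sketch, not an Open Interpreter function. It only encodes the rule that max_tokens should be smaller than context_window, with local mode's values as defaults.

```python
def check_token_settings(context_window: int = 3000, max_tokens: int = 1000) -> int:
    """Validate the two settings and return the tokens left for the prompt.

    The defaults mirror the values that local mode is described as using.
    """
    if context_window <= 0 or max_tokens <= 0:
        raise ValueError("context_window and max_tokens must be positive")
    if max_tokens >= context_window:
        raise ValueError("max_tokens must be less than context_window")
    # Whatever the reply does not reserve stays available for conversation history.
    return context_window - max_tokens
```

With the local-mode defaults this leaves 2000 tokens for the conversation so far; shrinking context_window to save RAM shrinks that remainder too.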

Python

Our Python package gives you more control over each setting. To replicate --local and connect to LM Studio, use these settings:

from interpreter import interpreter

interpreter.offline = True # Disables online features like Open Procedures
interpreter.llm.model = "openai/x" # Tells OI to send messages in OpenAI's format
interpreter.llm.api_key = "fake_key" # LiteLLM, which we use to talk to LM Studio, requires this
interpreter.llm.api_base = "http://localhost:1234/v1" # Point this at any OpenAI compatible server

interpreter.chat()

Context Window, Max Tokens

You can modify the max_tokens and context_window (in tokens) of locally running models.

For local mode, smaller context windows will use less RAM, so we recommend trying a much shorter window (~1000) if it’s failing or slow. Make sure max_tokens is less than context_window.

interpreter --local --max_tokens 1000 --context_window 3000

Verbose mode

To help you inspect Open Interpreter, we have a --verbose mode for debugging.

You can activate verbose mode by using its flag (interpreter --verbose), or mid-chat:

$ interpreter
...
> %verbose true <- Turns on verbose mode
> %verbose false <- Turns off verbose mode

Interactive Mode Commands

In interactive mode, you can use the commands below to enhance your experience.

Available Commands:

- %verbose [true/false]: Toggle verbose mode. Without arguments or with true, it enters verbose mode. With false, it exits verbose mode.
- %reset: Resets the current session’s conversation.
- %undo: Removes the previous user message and the AI’s response from the message history.
- %tokens [prompt]: (Experimental) Calculate the tokens that will be sent with the next prompt as context and estimate their cost. Optionally calculate the tokens and estimated cost of a prompt if one is provided. Relies on LiteLLM’s cost_per_token() method for estimated costs.
- %help: Show the help message.
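To make the command syntax concrete, here is a minimal sketch of how a line such as %verbose false could be split into a command name and an optional argument. It illustrates the syntax only and is not Open Interpreter's actual parser; parse_magic_command, verbose_enabled, and KNOWN_COMMANDS are hypothetical names.

```python
from typing import Optional, Tuple

# The command names listed above, without the leading percent sign.
KNOWN_COMMANDS = {"verbose", "reset", "undo", "tokens", "help"}


def parse_magic_command(line: str) -> Optional[Tuple[str, str]]:
    """Return (command, argument) for a known %-command, or None otherwise."""
    stripped = line.strip()
    if not stripped.startswith("%"):
        return None
    # Split once so that a %tokens prompt keeps its internal spaces.
    name, _, argument = stripped[1:].partition(" ")
    if name not in KNOWN_COMMANDS:
        return None
    return name, argument.strip()


def verbose_enabled(argument: str) -> bool:
    """%verbose turns the mode on unless the argument is 'false'."""
    return argument.lower() != "false"
```

Under this sketch, anything that does not start with % (or names an unknown command) would simply be treated as an ordinary chat message.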

Configuration

Open Interpreter allows you to set default behaviors using a config.yaml file.

This provides a flexible way to configure the interpreter without changing command-line arguments every time.

Run the following command to open the configuration file:

interpreter --config

Multiple Configuration Files

Open Interpreter supports multiple config.yaml files, allowing you to easily switch between configurations via the --config_file argument.

Note: --config_file accepts either a file name or a file path. File names will use the default configuration directory, while file paths will use the specified path.

To create or edit a new configuration, run:

interpreter --config --config_file $config_path

To have Open Interpreter load a specific configuration file, run:

interpreter --config_file $config_path

Note: Replace $config_path with the name of or path to your configuration file.
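The name-versus-path rule for --config_file can be sketched as follows. This is an illustration only: DEFAULT_CONFIG_DIR is a hypothetical placeholder (the real default directory is not stated here), and treating any argument that contains a directory component as a path is an assumption about how the distinction is made.

```python
from pathlib import Path

# Hypothetical placeholder; the actual default configuration directory may differ.
DEFAULT_CONFIG_DIR = Path.home() / ".config" / "open-interpreter-example"


def resolve_config_file(config_file: str, default_dir: Path = DEFAULT_CONFIG_DIR) -> Path:
    """Map a --config_file value to a concrete path.

    A bare file name is looked up in default_dir; anything that contains a
    directory component (including a leading ./ or ~/) is used as given.
    """
    if Path(config_file).name == config_file:
        # Bare name such as "config.turbo.yaml"
        return default_dir / config_file
    return Path(config_file).expanduser()
```

So resolve_config_file("config.turbo.yaml") would point into the default directory, while resolve_config_file("/etc/oi/config.yaml") would be returned unchanged.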

Example

- Create a new config.turbo.yaml file

interpreter --config --config_file config.turbo.yaml

- Edit the config.turbo.yaml file to set model to gpt-3.5-turbo

- Run Open Interpreter with the config.turbo.yaml configuration

interpreter --config_file config.turbo.yaml

Sample FastAPI Server

The generator update enables Open Interpreter to be controlled via HTTP REST endpoints:

from fastapi import FastAPI
from fastapi.responses import StreamingResponse
from interpreter import interpreter

app = FastAPI()

@app.get("/chat")
def chat_endpoint(message: str):
    def event_stream():
        for result in interpreter.chat(message, stream=True):
            yield f"data: {result}\n\n"

    return StreamingResponse(event_stream(), media_type="text/event-stream")

@app.get("/history")
def history_endpoint():
    return interpreter.messages

pip install fastapi uvicorn
uvicorn server:app --reload

Safety Notice

Since generated code is executed in your local environment, it can interact with your files and system settings, potentially leading to unexpected outcomes like data loss or security risks.

⚠️ Open Interpreter will ask for user confirmation before executing code.

You can run interpreter -y or set interpreter.auto_run = True to bypass this confirmation, in which case:

- Be cautious when requesting commands that modify files or system settings.
- Watch Open Interpreter like a self-driving car, and be prepared to end the process by closing your terminal.
- Consider running Open Interpreter in a restricted environment like Google Colab or Replit. These environments are more isolated, reducing the risks of executing arbitrary code.

There is experimental support for a safe mode to help mitigate some risks.
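The confirmation step can be pictured as a small gate in front of whatever executes the code. The sketch below is a simplified illustration, not Open Interpreter's implementation. The function run_with_approval and its runner and ask parameters are hypothetical; auto_run is named after the setting mentioned above.

```python
from typing import Callable, Optional


def run_with_approval(
    code: str,
    runner: Callable[[str], str],
    auto_run: bool = False,
    ask: Callable[[str], str] = input,
) -> Optional[str]:
    """Run `code` through `runner` only if the user approves or auto_run is set.

    Returns the runner's output, or None when the user declines.
    """
    if not auto_run:
        answer = ask(f"Would you like to run this code? (y/n)\n\n{code}\n\n> ")
        if answer.strip().lower() not in {"y", "yes"}:
            return None
    return runner(code)
```

Passing ask as a parameter keeps the gate testable: a script can supply a function that always answers "n" to verify that nothing runs without approval.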

How Does it Work?

Open Interpreter equips a function-calling language model with an exec() function, which accepts a language (like “Python” or “JavaScript”) and code to run.

We then stream the model’s messages, code, and your system’s outputs to the terminal as Markdown.
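As a rough illustration of that idea, the sketch below dispatches on a language name and runs the code in a subprocess, returning its output. It is a simplified stand-in, not Open Interpreter's actual exec() implementation: the function name run_code, the set of supported languages, and the commands used to launch each one are all assumptions.

```python
import subprocess
import sys

# Assumed mapping from language name to the command that runs a code string.
COMMANDS = {
    "python": [sys.executable, "-c"],
    "shell": ["sh", "-c"],
    "javascript": ["node", "-e"],  # requires Node.js on PATH
}


def run_code(language: str, code: str, timeout: float = 30.0) -> str:
    """Run `code` in a subprocess and return its stdout followed by its stderr."""
    key = language.lower()
    if key not in COMMANDS:
        raise ValueError(f"Unsupported language: {language}")
    result = subprocess.run(
        COMMANDS[key] + [code],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return result.stdout + result.stderr
```

A model that supports function calling would be told about a function with these two arguments, and the returned text would be fed back into the conversation as the system's output.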